When AI Rewrites History: A Global Threat to Truth

In every conflict, truth is fragile. In the age of artificial intelligence, history itself is under threat.

The war in Gaza has exposed something far more dangerous than misinformation or biased reporting: the rise of AI-generated images presented as historical reality. These images are not merely misleading. They actively reshape memory, manipulate emotion, and distort the historical record—not only about Israel, but about conflicts and events worldwide.

This is not a local issue. It is a global historical crisis.

The Power and Peril of Images

Humans instinctively trust images more than words. A photograph feels like proof. It bypasses analysis and goes straight to emotion. This psychological shortcut worked reasonably well in the age of traditional photography, where images had traceable origins, photographers, dates, and context.

AI has shattered that framework.

Today, an image can be generated with no camera, no location, no timestamp, and no witness, yet it looks as real as any war photograph. When such images circulate online without clear labeling, they acquire false authority. Viewers do not ask “Is this real?” They ask “How could this happen?”

That is the danger.

Gaza: Where AI Meets Narrative Warfare

The Gaza war has become one of the most visually weaponized conflicts in modern history. Alongside real footage and authentic journalism, there is now a flood of AI-generated imagery depicting scenes of devastation, suffering, and alleged atrocities.

Some of these images are shared carelessly. Others are shared deliberately to inflame outrage, assign blame, and delegitimize Israel before facts can be established.

Israel is judged not on verified actions, military law, or documented evidence, but on synthetic visuals designed to provoke emotional certainty.

This does not deny civilian suffering. Civilians suffer in every war. But when suffering is artificially constructed or exaggerated through AI, it ceases to be testimony and becomes propaganda.

From Illustration to False Memory

The greatest danger of AI imagery is not immediate outrage. It is manufactured memory.

When people repeatedly see AI-generated images associated with a real conflict, those images become part of their mental archive. Over time, viewers begin to remember events they never witnessed. Fiction replaces uncertainty. Nuance disappears.

This is how false history is born.

In a few years, people will recall “images from Gaza” without knowing which were real and which were invented. The line between documentation and imagination dissolves, and once that happens, correcting the record becomes nearly impossible.

A Worldwide Problem, Not an Israeli One

While Israel is uniquely targeted by narrative warfare, this threat is global.

AI-generated historical imagery is already appearing in:

The war in Ukraine

Conflicts in Syria, Yemen, and Sudan

Alleged massacres in Africa and Asia

Reimagined scenes of colonialism and slavery

Protest movements and civil unrest across the West

In each case, AI is used to simplify complex realities into emotionally charged morality plays. Context is erased. Responsibility is assigned visually, not factually.

History becomes aesthetic ideology.

Bias Built Into the Machine

AI does not generate images in a vacuum. It is trained on existing datasets, datasets shaped by political bias, cultural framing, selective documentation, and dominant narratives.

When AI generates “historical” images, it often:

Reinforces stereotypes

Centers certain groups while erasing others

Reflects ideological assumptions rather than evidence

This is especially dangerous for conflicts involving Jews and Israel, where visual tropes have historically been used to demonize and dehumanize.

Technology does not eliminate bias. It automates it.

The Collapse of Historical Standards

Real historical inquiry depends on rigor:

Primary sources

Verifiable provenance

Context and corroboration

AI-generated images have none of these.

They have no photographer.

No archive.

No chain of custody.

No accountability.

When such images are treated as evidence, or even as “illustrative truth,” they erode trust in all documentation, including real photographs and authentic archives.

Ironically, this benefits extremists and denialists most. When everything can be fake, nothing needs to be proven.

Ethical Lines We Should Not Cross

There is also a moral dimension.

Generating realistic images of:

Dead children

Grieving families

Civilian devastation

raises serious ethical questions. Trauma should not be simulated for engagement. Victims should not be digitally staged to serve political agendas.

War is not a prompt.

Suffering is not content.

Why This Matters Profoundly for Israel

Israel is not only defending itself militarily. It is defending:

Its legitimacy

Its history

Its moral standing

AI-generated visuals threaten to replace evidence with emotion, law with outrage, and history with illusion. When false imagery becomes accepted truth, international discourse collapses into spectacle.

A democracy cannot be judged by fiction.

A Necessary Standard for the Future

AI-generated images must never be presented as historical evidence.

If used at all, they must be:

Clearly labeled as AI-generated

Used only for abstract illustration

Never depicting real individuals or alleged crimes

Always accompanied by verified sources

Anything less is not education; it is deception.

History belongs to evidence, not algorithms.

If we allow AI to overwrite memory, we will lose not only Israel’s story but also the possibility of truth in any conflict, anywhere in the world.

When images lie, memory follows.

And when memory is corrupted, justice becomes impossible.

Related Articles

Fear

For years, Israelis have lived with a kind of fear that is difficult to fully grasp from a distance.

War, Law, and the False Genocide Claim

The public debate is loud and often absolutist. The professional military assessment is quieter and more precise. It does not deny the brutality of war. It does, however, challenge the claim that this particular war meets the definition of genocide.

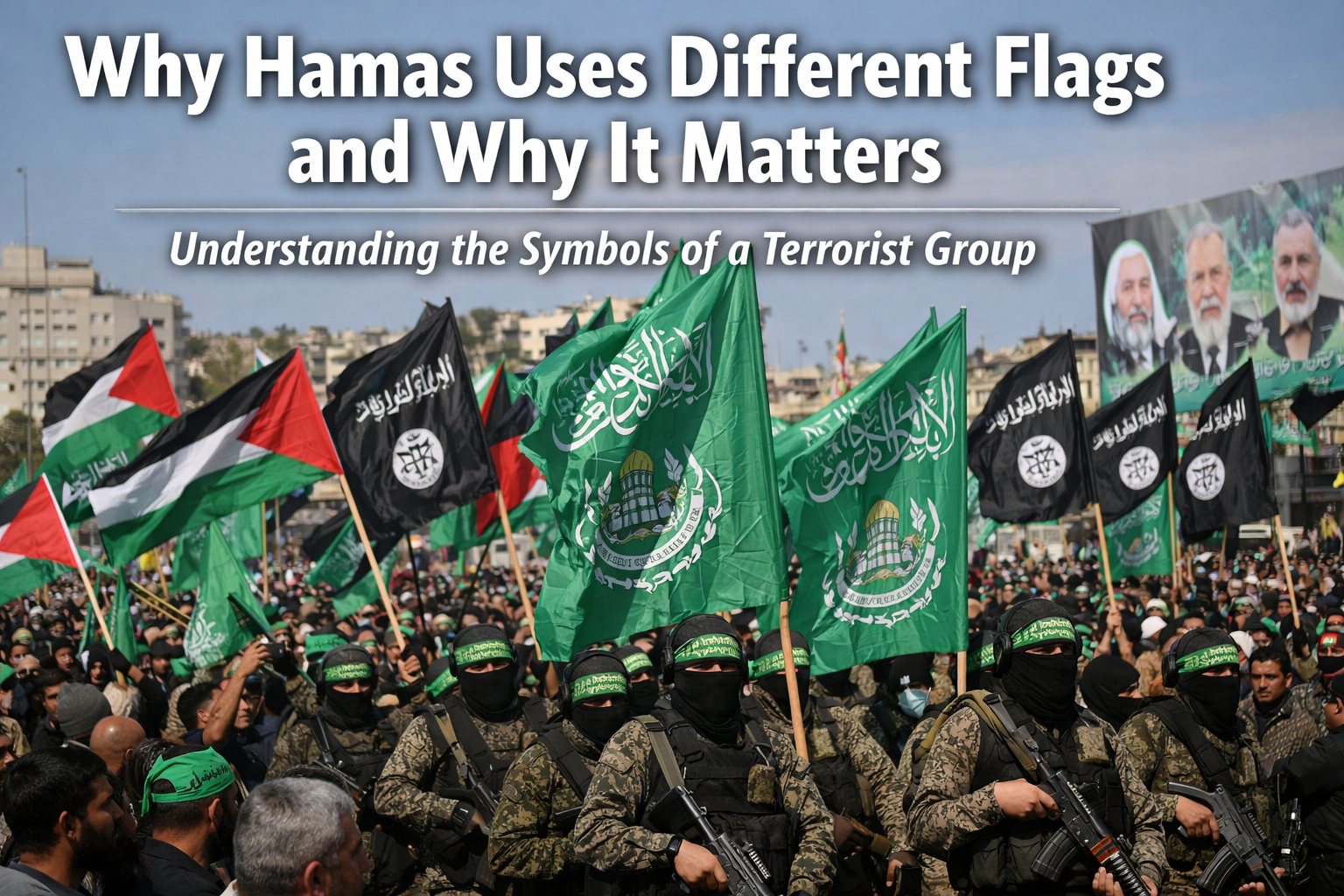

Why Hamas Uses Different Flags and Why It Matters

Understanding the symbolism behind flags used by Hamas is essential to grasp how the group presents itself to different audiences and why this creates concern, especially from a pro Israel perspective.